Today I will be live-blogging from the Tech@LEAD conference (Technology for Cultural Inclusion) at the Kennedy Center in DC. I hope to be a conduit for your participation in the event, so please let me know what you would like me to ask presenters or report on from the sessions, either in the comment section below or by tweeting to the attention of @futureofmuseums with the tag #TechLead2013.

You can check out the program at the conference website. This pilot event is bringing together people from arts, education, design, exhibition, media, IT, mobile development, etc., to explore how the design and application of technologies can support inclusion of people with disabilities in cultural experiences.

Here are the guiding questions for the day:

- More technology in cultural and natural history institutions means more challenges and more opportunities for accessibility – what should we do about that?

- What technologies, tools, models and techniques from the wider world can we apply to be more fully inclusive?

- How can we focus the development and proliferation of new and existing technologies to be inherently accessible and inclusive?

- New visitor experiences are coming – what should we do to get ready for them?

My questions for you (answers via tweet or comments):

1) What challenges does your museum face in being accessible to people with disabilities?

2) Can you share stories of new tech (or new applications of old tech) that helps support inclusion?

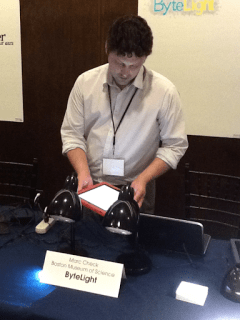

Boston Museum of Science’s Ben Wilson shows me the Bytelight system, which provides indoor positioning with 1 ft accuracy, enabling people to use their assistive personal electronic devices to access highly localized content.

Will Mayo talks about SpokenLayer, a service in which professional readers make published materials accessible as audio within 24 min. They find that 62% of users listen all the way through the recorded material. The New Republic is now making all articles available this way. Will points out that anyone with their eyes engaged in another task (driving, exercising) is “functionally blind” when it comes to written text.

Artist Halsey Burgund performs his “Patient Translations” piece created with Roundware, a “flexible, distributed framework which collects, stores, organizes and re-presents audio content. Basically, it lets you collect audio from anyone with a smartphone or web access, upload it to a central repository along with its metadata and then filter it and play it back collectively in continuous audio streams.” Hmmm, sound stream of commentary from people in your museum’s galleries?

@nealstimler of the @MetMuseum demos Google Glass

What applications do you see for Google Glass (or its competitors) in museums?

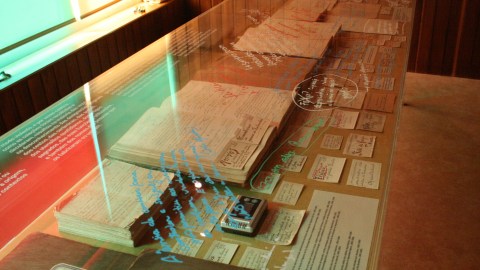

John Tobiason of the National Park Service shows off their accessible tour apps, including the kiosk version.

John says he & his colleagues were surprised at how many people are using these apps onsite for navigation & accessibility, not just interpretation.

Annuska Perkins explains wearable tech through Project Blinkie Blanket, a smart e-textile driven by LilyPad Arduino microcontrollers. Proximity, touch, and temperature can all be inputs to the Internet of Things, triggering useful actions. On a wheelchair, for example, sensors could contribute to crowdsourced accessibility maps.

Talking to Amanda Cachia about museums exploring images of disability through art. Check out her exhibit What Can a Body Do? Speaking of accessibility, the media section of the exhibit’s website has audio recordings of all the catalog texts.

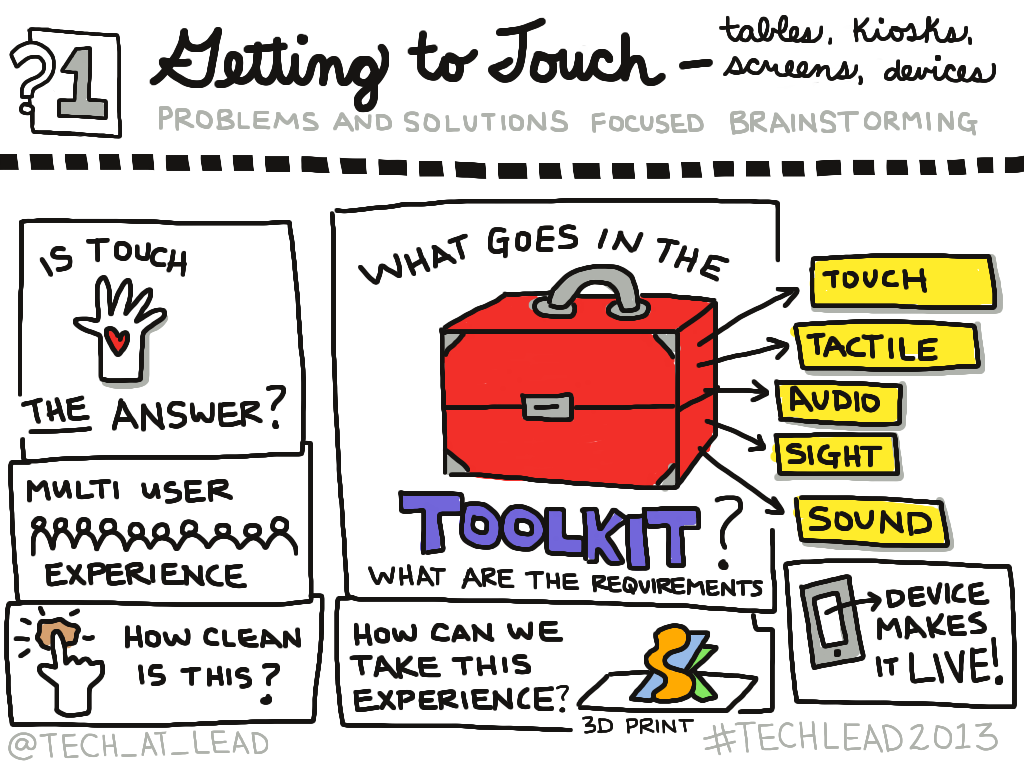

You can view more notes from the day, including some wonderful real-time “doodles” like the one below, at the Tech@LEAD website.