Immersive technologies such as augmented reality (AR), virtual reality (VR), mixed reality (MR), and cross reality (XR) are quickly gaining momentum as mass media tools deployable across industry and society. They have significant potential to change how we interact with our world, and even to transform our understanding of it.

For those unfamiliar with any or all of these terms, here are the distinctions: Virtual reality involves complete immersion in a designed virtual space. Augmented reality, on the other hand, depends on the user staying connected to physical place and space to achieve the intended experience and impact. In the augmented reality ecosystem, information is superimposed over the physical world, creating an extra layer of interactivity as virtual objects and content appear within a spatial context. In mixed reality, the real is merged with the virtual to produce new immersive environments. Finally, cross reality is the emerging umbrella term for all of these computer vision technologies, or for tools that incorporate more than one of them.

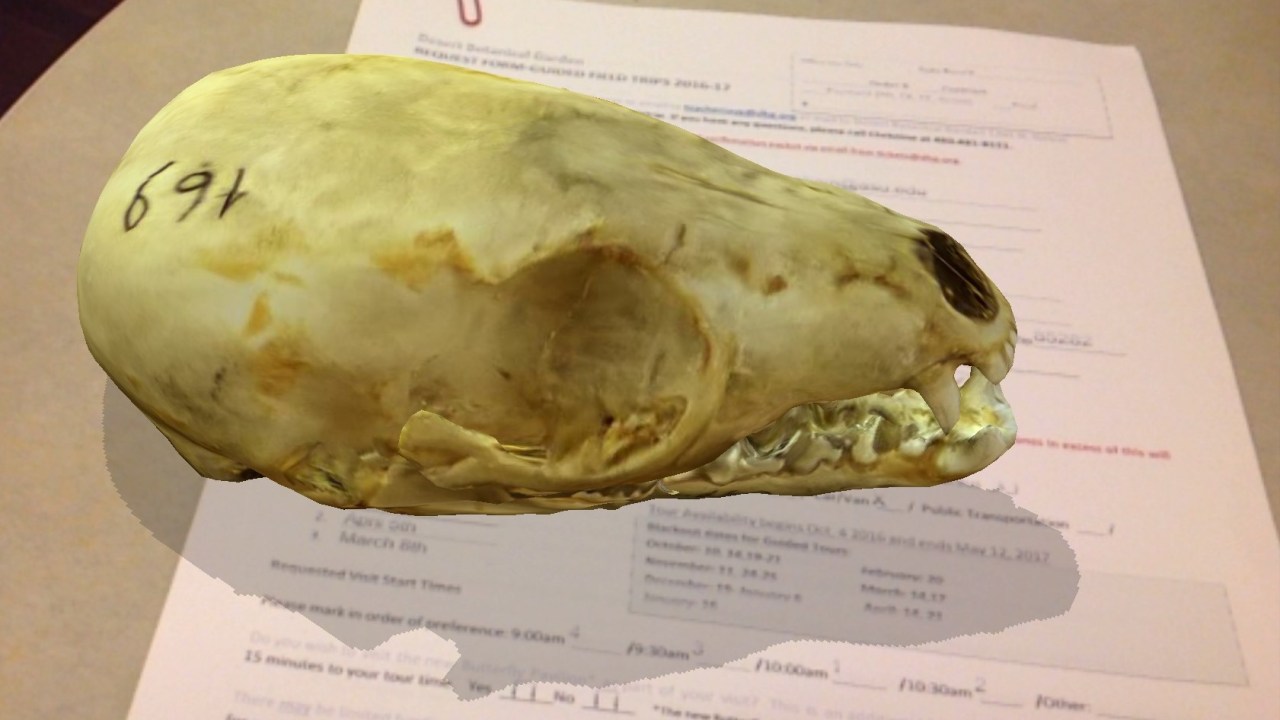

Object-based pedagogy and constructivism have been the predominant foundations of learning within the museum education field since its founding. But it stands to reason that these new technologies now have the potential to serve as a virtual extension of the physical object, providing deeper learning opportunities. While the physical artifact or object will always be the “real” thing, there are some significant drawbacks and barriers that limit how visitors can engage with these materials. Having worked in education and outreach in a university-based natural history collection for almost six years, I have encountered these barriers frequently. The most obvious is the “hands off” policy, imposed because natural history specimens are fragile. These specimens might also be pressed or dried, like botanical samples, making it difficult for visitors to fully appreciate their true anatomy and morphology. Fossils often raise questions about how the organism looked in life. These are all issues that XR technologies can potentially solve, and some organizations have already been working to do so.

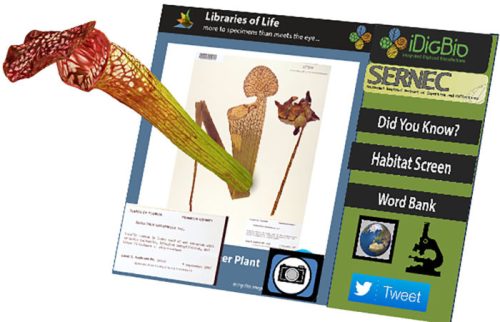

To explore the potential uses of augmented reality for natural history collections, iDigBio (Advancing Digitization of Biodiversity Collections program), which is funded by the National Science Foundation and based at the University of Florida and Florida State University, partnered with a development team at Arizona State University to develop a prototype app called Libraries of Life. The app has reached a global audience, and further development continues under co-developer Austin Mast, a research botanist from Florida State University, and ExplorMor Labs (an Arizona State University technology spin-off).

The app has become a collaborative augmented reality platform for natural history museums and nonprofits that are involved in biodiversity and conservation education and outreach. For instance, the Florida Museum of Natural History used the platform to promote a public campaign on endangered butterflies such as the Miami Blue, via augmented reality craft beer labels, some of which were distributed at restaurants in Disney’s Animal Kingdom theme park.

Users activate the augmented reality experience by scanning target images, which can appear on collection cards, floor graphics, stickers, and even tattoos at outreach events and exhibitions, allowing the public to interact with the specimens. Target images can also be enlarged. In one example, participants could engage with a larger-than-life praying mantis, walk around it, or take their picture standing next to it.

The app uses photogrammetry techniques to create 3D models of specimens such as insects, plants, and fossils, which were contributed by some fifteen collection groups that make up the iDigBio network. The resulting models are stored in a cloud repository, and when a target image is recognized, the specimen models and augmented reality scene are launched on the end user’s device. This 3D repository offers anyone with a mobile device access to collections, which will pave the way for new use cases as the virtual and physical worlds become intertwined. This movement parallels the massive efforts currently under way at museums to digitize collections in both 2D and 3D formats. The resulting models are increasingly available on platforms such as Sketchfab, effectively creating online virtual museums. For example, some of the models used in Libraries of Life can be found here.
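For readers curious about what happens between scanning a target image and seeing a specimen, the lookup step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Libraries of Life implementation: the repository URL, the catalog entries, the `.glb` model files, and the names `SpecimenModel`, `CATALOG`, and `resolve_target` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical base URL; the article does not say where the repository lives.
REPOSITORY_BASE_URL = "https://models.example.org"


@dataclass(frozen=True)
class SpecimenModel:
    target_id: str      # identifier of the printed target image
    common_name: str    # label shown to the visitor
    model_path: str     # path of the 3D model inside the repository
    scale: float = 1.0  # display scale; larger for enlarged targets


# Made-up catalog keyed by target-image identifier.
CATALOG = {
    "card-mantis": SpecimenModel(
        "card-mantis", "Praying mantis", "insects/mantis.glb"
    ),
    "floor-mantis": SpecimenModel(
        "floor-mantis", "Praying mantis", "insects/mantis.glb", scale=20.0
    ),
}


def resolve_target(target_id: str) -> Optional[dict]:
    """Return a scene description for a recognized target, or None if unknown."""
    model = CATALOG.get(target_id)
    if model is None:
        return None
    return {
        "name": model.common_name,
        "model_url": f"{REPOSITORY_BASE_URL}/{model.model_path}",
        "scale": model.scale,
    }


if __name__ == "__main__":
    # A floor graphic reuses the same model as the card, only much larger.
    print(resolve_target("floor-mantis"))
```

In this sketch a collection card and a floor graphic point at the same stored model and differ only in display scale, which is one plausible way a larger-than-life specimen could be served from a single repository entry.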

With the wide availability of AR developer software like Apple’s ARKit and Google’s ARCore SDK, and with many people now owning mobile devices with built-in AR viewers, we will likely see this technology integrated on a massive scale. AR-ready devices will allow museum visitors to easily engage with content in space and place, with or without target images or the need to download apps. This is especially great news for museums, since the biggest current barrier to the use of augmented reality in museums is probably the need to download an app.

If you are involved in the emerging technology world, you already know that things don’t stay the same for very long. The augmented reality ecosystem is about to shift again, with an offshoot called WebAR. With the infrastructure of WebAR, the virtual museum of the near future will be one in which you can search for a collection online, pull objects from it in the cloud, gather data on those objects, and engage with them in physical space, all through a web browser. With the emergence of haptic technologies combined with mixed reality, users will be able not only to view and interact with the object in front of them, but also to simulate touching it. Haptic technology offers some amazing possibilities that are currently being explored by companies such as Ultrahaptics. Their technology uses ultrasound to create three-dimensional shapes and textures which can’t be seen but can be felt. The potential of this technology to improve the accessibility of museum collections for visitors who are visually impaired is especially promising. Moreover, the emergence of technologies that incorporate all the senses with objects in a spatial context will undoubtedly push the boundaries of how we all learn from and engage with collections.

As museums start to think outside of the proverbial collection box, augmented reality and computer vision technologies may well be the tools of choice for moving experiences beyond the physical object while facilitating deeper engagement and accessibility. With this, new best practices and communities of practice will arise, along with the need to answer new questions.

- To what degree can virtual objects take the place of physical objects?

- What role and impact might new immersive technologies have in cognition and learning?

- How might this technology motivate social change and promote public awareness of issues, and advance the important role collections have in understanding our world?

While the landscape of emerging technologies unfolds, there is perhaps no better time to explore how these technologies might democratize the museum and move us beyond the collection box. This is a real opportunity to change the dynamics of how our audiences understand and engage with collections and the world around them.

Libraries of Life is soon releasing a new version of its app that categorizes collections based on taxonomic groups. Look out for the forthcoming Android and iOS app releases in the coming weeks. Follow us at @ExplormorLabs for more!

Acknowledgements:

A special thanks to iDigBio, the Florida Museum of Natural History, Austin Mast (FSU), Adam Chmurzynski (app development), the Virginia M. Ullman Foundation, Nico Franz (Arizona Natural History Collections), and to all of our contributors and sponsors who have supported this effort.

About the author:

Anne Basham is Project Director of the Libraries of Life Project and Founder of ExplorMor Labs. Anne received her M.Phil degree in Museum Studies from Cambridge University and a doctorate in Educational Leadership and Innovation from Arizona State University. Her research interests are in the learning and cognitive sciences as they relate to collections, new technologies, and the role of the “social museum” in bridging the gap between collections and community. For the past six years Anne served as Education and Outreach Coordinator at the Arizona State University Natural History Collections.