Watch this recorded CARE-apy session where we explore numerous evaluation methods that you can use to understand a variety of things, including:

- Information that will help you make decisions before you develop and implement a program or project

- Information and feedback loops to support you as you develop your program or project

- The extent to which goals, objectives, and intended and unintended outcomes are achieved

- Strengths and weaknesses of individual, team, organizational, and community processes

While surveys will be a topic of discussion, we will go beyond this “go to” method, and discuss the benefits and challenges of a variety of other quantitative and qualitative methods such as interviews, observations, focus groups, voting, feedback gathering, and journaling. This session will explore why it’s important to match methods and processes with key evaluation questions. Participants will experience various methods, live, in this CARE-apy.

Transcript

Ann Atwood:

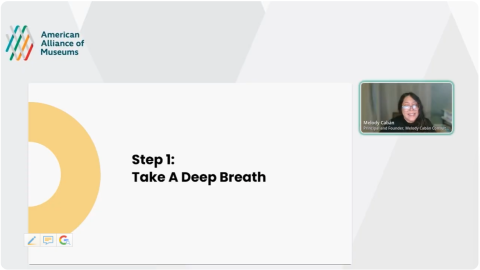

Today, we’ll be talking about Discussing Data Collection Methods. This webinar is produced by the Committee on Audience Research and Evaluation, one of the American Alliance of Museums’ professional networks. CARE-apy webinars are intended to connect our members and share professional expertise. My name is Ann Atwood with the Museum of Science in Boston, Massachusetts, and today we’ll be talking about Data Collection Methods with Sarah Cohn from Aurora Consulting and Marley Steele-Inama from the Denver Zoo.

This is the second webinar in our Getting Started With Evaluation series. If you’re interested in joining us for the third part of the series on analyzing data for action, I’ll add the link for that event in the chat in just a moment. But first, I’d like to give a few quick housekeeping items before we start.

Today, we’re recording our session and we’ll have time during the presentation for question and answer. In the meantime, since we are a large group, I’ll keep you all on mute. If you have any questions during the presentation, please enter them into the chat and we’ll address them during and after the conversation. We do plan to make this webinar available on AAM’s YouTube, and it should be available in three to six weeks. These webinars are kept free of charge, so we’d like to encourage you to consider membership with the American Alliance of Museums. With that, I’ll turn it over to Sarah and Marley. Thank you for presenting today. Sarah, Marley.

Marley Steele-Inama:

Thanks, Ann.

Sarah Cohn:

Thanks so much, Ann. Yeah, and it was great to see you all, either photos of you or your names, or… Hey, Heather in a mask. Good to see you on video. Feel free to keep videos deactivated while you’re eating. Although I ate in front of the team as we were getting on early, or turn your video on and join us. We’re hoping for this to be a bit of a conversation as well as more of a presentation. So, we are totally flexible with whatever your needs are.

I’m going to start us off with actually sharing some slides. We are thinking about these slides as both moving us through our conversation, as well as being a resource for you all at the end. You’ll see, as we go through our time together that there are various links to download or to connect out to a different website for some of the resources that we’re talking about. Towards the end, we will have an activity built into the slides. So, you all have editing privileges. Be careful what you click or what you do. If anything happens, of course, we can figure out how to bring it all back, but just wanted to share all that with you.

We’ll start with just introducing ourselves really briefly. My name is Sarah Cohn. I use they, them or she, her pronouns. I am still in Dakota land in Minneapolis, Minnesota, and I’m with Aurora Consulting. I do consulting around evaluation and other strategic planning, nonprofit related efforts with museums nationally, and then nonprofits here in the Twin Cities.

I was previously at the Science Museum of Minnesota for 10 years. I’m a past chair of CARE, and I am a member of the Professional Networks Council, which is all 20-plus professional networks, CARE being one of them, where we meet and think about how we support our members through lots of crosscutting conversations. If you’re members of other PNs, welcome. Marley.

Marley Steele-Inama:

Yeah. Thanks, Sarah. Hello, good afternoon, good morning, wherever you’re at. Welcome to this session. Sarah and I are really excited. Sarah and I have known each other for 12 years plus, who knows at this point.

Sarah Cohn:

Definitely we met.

Marley Steele-Inama:

You’ll see that Sarah and I are friends and we’ve had the opportunity to work together for a long time in CARE. I too was a past chair of the Committee on Audience Research and Evaluation, and now I serve in a variety of different capacities with the leadership team.

My name is Marley Steele-Inama. I use she, her pronouns. I work at Denver Zoo. For about three more days, I will be serving as the Director of Community Research and Evaluation, and officially, on Monday, I transition into a new role for the organization, which is going to be the Director of Community Engagement. But I am still going to be staying very connected with CARE. I’m also part of the Social Science Research and Evaluation group of the Association of Zoos and Aquariums as well. So many acronyms.

But I’m really excited to take everything that I’ve learned in the past 12 or so years that I’ve been doing research and evaluation at Denver Zoo and applying it into this new role with community engagement. Because essentially evaluation is community engagement. We are getting input, we’re getting insights. We’re getting feedback from our communities. Whether it’s on a guest experience, it’s informing a program development, it’s evaluating an evaluation or program, it’s still community engagement. I’m really excited to move into that new opportunity.

For those who have just joined a moment ago, our colleague, Ann at Museum of Science in Boston is going to be resubmitting the Google slide deck, or Sarah just did it too. For those that are just joining, I know that when you come in late, you can’t see the chat prior there. If you need it reposted, just throw it in the chat and we’ll make sure that we get it put in there.

As a reminder, we do have in the chat, this Google slide deck that we’re going to be referencing on and off throughout the conversation. We may say, on slide five, this is what I’m talking about. It’s completely okay if you don’t have access to it, if you don’t have dual screens or you don’t want to bounce back and forth, we’re going to be completely flexible.

What we really wanted to do is, this is the second of a three-part series that CARE-apy is offering. The first one, if anybody was there, was a few weeks ago, and it was facilitated by a couple of our colleagues, Emily Craig, who’s with LACMA, and Kerry Carlin-Morgan, who’s with Oregon Coast Aquarium. They spent the hour webinar talking through a lot of ideas around how to craft questions when you are administering surveys or interviews with your community members.

Sarah and I decided to take a little bit of a different approach, and we’re going to step back just a moment and go real high level around thinking about evaluation as a process, and then we’re going to move into what we’ve promised in this session, which is going to be exploring methods. The reason we’re doing that is because selecting methods is super contextual, based on that higher level stuff. It’s based on what are your goals? What are your questions? What are your needs? Who or where are you going to gather data? What’s the amount of contact you may have with an audience that you’re gathering insights from or feedback from, or potentially you’re trying to understand what they gained out of an experience. All of that is used to inform which method.

Our real goal today is to get us thinking about, not just immediately jumping to the methods that we’re comfortable with, or we know exist, which are like these surveys or interviews, maybe observations, focus groups, and really starting to lean more into this idea of taking the higher level, all that other input before deciding what those are going to be.

But before we jump into that, Ann is going to share a poll with you all, and we’d like for you to select… It is a multiple choice, but if you feel like there’s one that really resonates, you can select that one. Once it gets posted, I will read the question and the responses. You should hopefully all see this now up on your screen. The question is, when you think about starting or including an evaluation in your process, which of these, you can select one or all, do you subscribe to?

Do you think about it when you’re pursuing an idea? Do you think about evaluation when you’re designing or developing your idea? Do you think about it when you’ve finished building and you’re ready for visitors or a community to engage with that idea? Or do you think about evaluation when everything’s done and, oh my gosh, we need to report it out? We need to report something out. Then there’s a last option, which is none of these, and it’s completely fine if you select that one as well. We’ll keep this poll open for probably 10 more seconds and then we’ll share the results with everyone.

Sarah Cohn:

If anybody does check none of these, I’d love it, if you’re willing, to put into chat when it is you do start thinking about including evaluation or when does it come up? Maybe I should evaluate, maybe I should gather some kind of data from somewhere other than my own brain.

Marley Steele-Inama:

All right. I believe Ann is going to be sharing the results here shortly.

Sarah Cohn:

I think everyone should see them. It looks like 43% of us said, when I’m thinking about whether to pursue an idea. Like, I have a new idea, is it worth moving forward with? Over half of us said, when I’m designing or developing it, as I’m thinking about my marketing or my new way of having team meetings or a new program or exhibit. 50% of us said when I’m done: it’s built and I’m ready to take it out and share it with others.

Then almost a third of us said, when everything’s done and I need to report on my results to my funder, to my manager, whomever. Then a few of us, 2% said none of these. Julie, I see that you put in the chat, I think it depends on project size. Absolutely, yeah, when does it make sense to incorporate evaluation into a process, into our various efforts, and all of the things that we need to keep track of. Fantastic. Well, thank you so much for sharing that.

Marley Steele-Inama:

Thank you.

Sarah Cohn:

Yeah, I’m going to close that so I can see. I’ll just say that I think Marley and I both think about evaluation as being able to support any and all phases of our work. Whether that work is preparing for a team discussion, reviewing a budget, distributing communications or marketing materials or considering a visitor’s overall experience after they visit our institutions.

We think about evaluation in a pretty broad way. If we have a question that we can’t answer on our own and we’re going to go out and try to find answers, find ideas, perspectives from somewhere else, we think about that as potentially some level of evaluation. Whether it’s tiny or whether it’s going to take a lot of time. We’re going to share a basic framework that we both use for making evaluations useful to our work, that helps us get to thinking about the right methods for each project.

What we’re also going to do, as you see in the slides (thanks, Marley, for throwing that back into chat), is build on existing resources. Because what is out there is a fantastic starting place and there’s no need for us to reinvent the wheel, if you will, or try to build everything from scratch when we can take the great ideas that Ann has, that Marley has, that Sabrina has and build on those and make them specific to our institution or to our own projects’ or efforts’ needs.

The first one that I’m going to suggest is on slide three. It’s called team-based inquiry. This is a fantastic resource for considering and building upon evaluation within our organizations. This material, which you all can link to by clicking on “all materials can be found at nisenet.org,” is both a book, a packet, as well as a bunch of sample instruments and forms, and we’ll actually talk through a couple of them today, as we think about how do we get to identifying the right method for our work.

Team-based inquiry is really about thinking about how do we make evaluation, formal evaluation, capital E, whatever you want to call it, right size it for all of us working in museums, zoos, aquariums, institutions across the country. How do we frame it so that we think more, we’re able to incorporate it on the fly as we need it, as we ask questions and we, or our teams can’t answer them and we need to get information from others. Do you want to talk about how we’ve expanded on it, Marley?

Marley Steele-Inama:

Yep. I was just adding the slides back in. Anytime anybody needs access to the slides, you could direct message Sarah or me or put it into the chat and we’ll get it out there to you.

Sarah Cohn:

Thank you.

Marley Steele-Inama:

On slide four is a little bit more of a real… I think it’s a tangible, simple way of thinking about the cycle of evaluation, as these four big buckets of phases. The first thing we always start off with, anytime my colleague, Nick Fisher, who’s on this call as well, anytime he and I go into a meeting and people say they want to do an evaluation, the first question we ask is, “Well, what do you want to know?”

It’s really at that high level, not the, on a scale of one to 10, how do you feel about something? We’re really like, what are we really trying to understand? That helps us understand, do we, do our colleagues, does the organization, do they want to know things related to results, changes, impact, those sorts of things? Are they more interested in a process and what’s working well and maybe what are challenges with that process? They may want to know about ROI: are our inputs really making a difference at the level that we’re putting them into the effort?

Sarah’s going to talk a little bit more about framing those as key inquiry questions. I think that’s a really critical way to frame those, because in traditional training we call those evaluation questions, but those can get a little mixed up with the questions you would put on a scale, on a survey. In fact, when my colleague Nick and I have asked our colleagues here at the zoo to brainstorm evaluation questions, sometimes they do come up as, how likely are you to do this as a result of… I think, shifting that language to key inquiry questions, they don’t think about them in terms of what would that look like on a survey or in an interview? It’s really, what’s that big idea you’re trying to get at?

The questions inform the investigation, and that’s what we’re going to focus on here today is, how we collect the data to answer those questions. Sometimes it’s not people that are going to answer the question. Sometimes there’s other reports that exist. There’s other data that exists. There’s that secondary data to go get the information from, and then humans aren’t necessarily the subject or the source.

The next phase in the cycle is the reflection, which is really the key piece is, what’s the so what with the data we got from the methods that we’re utilizing? What are the themes that are emerging? What are some of the unique ideas and what’s the meaning behind them? How can we apply them? That last part is to actually apply the results for improvement.

I like to think of this cycle then as, how can you also combine this cycle, Venn diagram it with your program development cycle? Where can these phases fit in to all those different steps within your program development and implementation, because you can evaluate each piece of that whole program development cycle. You may have different questions within them, and you may use different methods within them. I think we had a few folks say that in the chat earlier, too.

Sarah Cohn:

Totally. When we think about the importance of language, which so much of what we are talking about in this series, and is what I think about in evaluation and in facilitation, words are everything. We get confused about evaluation question, is it the question that guides this evaluation, or here are the questions we’re hoping some visitor, some colleague will evaluate our efforts around?

I like this use of key inquiry questions or something bigger. Big questions is just a more general phrase that I often use too. What are your big picture questions? Why do you think evaluation is important? Why do you think this project is important? What are the things we’re trying to ask and look into?

Marley Steele-Inama:

Hey, Sarah, can I ask you a question?

Sarah Cohn:

Yeah.

Marley Steele-Inama:

What would be an example of what a key inquiry question could look like?

Sarah Cohn:

As we were thinking about all of this, I love the fact that you and I could think about really different kinds of examples. I’m going to take a path that might feel a little tangential to evaluation, but to you and me feels like really core to it. I am planning a full day of retreat with a museum here in the Twin Cities. One of the things that we’re wondering about is what are the big picture questions we need the whole organizational team to grapple with?

If we’re thinking about strategic planning, organizational culture change, what are some of the big questions that we have that will then help us frame the breakout discussions, and the small group discussions? Some of the questions I’ve been thinking about are, what’s our vision for where this museum is headed? What’s the culture that we want to begin practicing and embodying? Then, the nature of the small group discussions is going to be just a tiny piece of that. For me, that’s one that I’ve been thinking a lot about. What about for you?

Marley Steele-Inama:

I probably would use more of an example from a project or a program. Last summer we opened a new hospital, an animal hospital that has a visitor experience component to it. An evaluation question that came out of our constituent group that was defining what these key inquiry questions were going to be, one of the questions that came up was, they’re really curious about what’s the difference in the guest experience when there is a procedure that’s happening and when there’s not a procedure happening?

What that allowed Nick and I to do was to think about what methods we would use to help answer that question, and that allowed us to then consider getting feedback from guests with a post-experience survey, as well as observations. We made sure that we were able to discriminate between the data from when there was a live procedure and from when there wasn’t but it was facilitated by one of our educators. There were some unique differences that came out. That would be an example that I would use.

Sarah Cohn:

Nice. One of the things that I know comes up sometimes is when we start having these brainstorming conversations or say, we enter into a conversation with the team and they’re like, “Okay, here are the three goals we have for our program. Here are some of the potential stuff around learning, stuff around experience that we’re hoping that people will take away from this.” Now, because of that, we have seven different key inquiry questions.

Sometimes it feels like we can’t answer all of them. One of the things that I think about with this cycle is that, it is still building on itself. Every time we learn something and start to apply it, probably more questions come up for us, or we say, “Okay, that now actually makes this other question that we didn’t try to tackle in this first round, much more important,” before we put the finishing touches on this experience for people.

One of the things that I found really helpful with teams is on slide five. It’s what we call this questions worksheet. It’s really small here on my screen, but you can download it. We can also put the download link into the chat. This worksheet is actually a Word document, so you can use it and fill it out. What I found really interesting about this is, this worksheet both helps us identify, are we thinking about a question that actually is specific for me to ask a visitor? Now that you’re done with the program, what was your favorite thing? That’s not a big picture question. That’s not one of these key evaluation inquiry questions, it’s a more focused one, but instead, what are people learning? What’s changing the experience of someone in that new space that you were just talking about, Marley?

It includes these different questions to help us reflect on essentially, are these inquiry questions critically important for us to answer right now, or sometime before the end of the project? Do we have the time and other resources to be able to gather the information we might need and really, what kind of information would we be looking for? Is it information from visitors? Is it from the box office? Is it from some kind of time-to-entry kind of thing? Is somebody else down the hall in accounting? Where might that information live to help us answer the question?

Then, I think really most importantly, because we’re really thinking about this, even in summative phase where we’re reporting back to others, I always try to think about, how can we use this moment to help us move forward in our work. The second to last column has, what changes might you be able to make if you answered this question-

Marley Steele-Inama:

That’s so important to have this conversation, because why would you spend the time on collecting the data if you’re not going to use it?

Sarah Cohn:

Yeah, exactly. Yeah. Then, you and your team, or you by yourself can think through what are all these big picture questions now? How do we now, as I reflect on and answer all these questions about my inquiry question, what’s the level of priority for each one? You can then hone in on one, two, three questions that are going to guide this round of evaluation, right?

Marley Steele-Inama:

Yeah. Great resource.

Sarah Cohn:

Awesome. Maybe I’ll just slide to the next one, because it feels like a good segue. On slide six is another worksheet, which, warning, looks exactly the same. We became a little less creative. Liz Kollmann, who I see on here, was a co-author with me and Scott Pattison, who was previously at OMSI. We can attest that we were not the graphics people. We just know how to build tables and ask questions. That’s really what’s reflected here.

But this can really help us now think about, okay, we know what the big goals are for this project, we have identified all of the big questions that we have and we’ve honed in on one or two. Now, how do we get to figuring out the methods? You could do this worksheet for each inquiry question, or you could do it for all of them, depending on how interconnected we think those two questions are.

This would help us really think about what kind of data collections might be useful. Within the previously created worksheet, it has those really standard methods already listed, but it’s a Word document, so, again, you can go in and erase those and just leave those method options open. Again, this gets us to reflect on, okay, if we do surveys, which I’m super comfortable with, so obviously we should do it, and it’s really easy to build a couple of polls on Zoom or send out an online survey. Let’s think a little bit more about what could we get with this and what would we miss?

Every single data collection method has its strengths and has its limitations. This really gets us thinking about, if I did a survey, what would I not know? Then also, again, what would the lift be? What are the resources you need to do this, to be able to use each data collection method really well, and what else might be more useful?

Marley Steele-Inama:

I think the very first time when I was in my first evaluation role and I was asked to run some focus groups and I got really excited about it. That was when I realized how intensive focus groups are. The time that goes into those. Sometimes it is, it’s actually trying some of these methods and finding it’s another one of those variables, one of those inputs into it of, what’s it going to take to actually facilitate it? It doesn’t mean to always just fall into what’s easy and what you know how to do, because, like you said, it may not be the right tool to use the right way, the right method.

Sarah Cohn:

Yeah. One of the things that I’ve been thinking a lot about with methods more recently is not only what information can I get, but what kind of experience does each method lend itself to? Focus groups are definitely time intensive, with the stress of getting the right group of people together to really build on each other’s ideas and doing all of the logistics work to get up to that. But then, there’s the fact that the group of people is the right group of people to be having a really generative conversation, and I, on behalf of my institution, am developing these relationships with individuals, families, community partners.

I think a lot about that as well. I love seeing on slide six, at the bottom, sticky notes and other artifacts. Sometimes I think a lot about what’s the experience that we’re allowing them to have. If they were in a program and now they’re coming out of it, and I’m going to gather data, how can I match that experience? How can we build on whatever was created there?

Marley Steele-Inama:

It’s part of the learning experience. It’s not tangential.

Sarah Cohn:

Exactly. Right. We are a part of representing and creating that sense of our organization through this work. Do you want to talk about what it’s looked like to pull all this together in-

Marley Steele-Inama:

Yeah. On slide seven is just a screenshot of a really pretty simple evaluation plan that a few of us put together for a project that we’re working on. It’s a partnership with Denver Zoo and then the zoo that’s in Sarah’s backyard, which is Como Park Zoo and Conservatory in Saint Paul. It’s a project where we’re working on improving our… Well, it’s a lot of things, but really about improving the resources we have and the training we offer around program development, implementation for empathy.

It’s a real finite project, and we landed on three. You’ll see evaluation questions on here, but those are those inquiry questions. We landed on three of them. The first one, if you can’t read it, I’ll read it out loud. It says, how does the enhanced roadmap… Roadmap is a process and some resources we have for program development. How does the enhanced roadmap impact Como and Denver’s ability to operationalize empathy best practices and build capacity to foster empathy for wildlife?

That’s a mouthful, but really what that question is trying to get at is, how are the changes that we’re going to make in this process and this resource going to impact our ability to facilitate for empathy for wildlife? We then had to think about, okay, well, who’s going to answer that question for us or what will answer that question?

We’re utilizing the project leads on that. They are going to be the input of the data on that. It’s a lot of reflection, it’s their perceptions. That’s going to be the source. The indicator that the evaluator will look at is, to what extent are these project leads describing improvements or strengths or challenges presented in operationalizing these practices, these changes that we’re putting into place?

Then that makes us think about, okay, what’s the right method to collect that information? We agreed that a group interview or multiple group interviews would really do that. I think where we’ve landed organically with that method is we do monthly check-ins as a whole team, and those conversations are just happening monthly. Those group interviews are happening a little bit more organically in these meetings.

Then you can see that last column is we identified, what’s the use? It’s so important. How are we going to actually use the information from that? That’s just one of the examples of the three evaluation questions that we came up with in our evaluation plan. The reason it looks laid out like this is because we submitted it as part of a grant. We wanted the plan to look organized and pretty, for lack of better words.

Sarah Cohn:

Looking at this, I imagine that you all have your own internal ways of asking and pulling this information together that probably doesn’t automatically use those question and method worksheets, and yet a number of those key questions on those worksheets are reflected here in different columns.

Marley Steele-Inama:

Yeah. You’ll see that second evaluation question is really geared at, how does this enhanced process and resources, how do they strengthen the ability for our staff and volunteer facilitators to do this work? Now, our source is the people that are the facilitators. For Como, it’s volunteers, with Denver Zoo, it’s our staff.

This is really going to be based on training, the training we offer and the impact of that training. The training is more than just a one time training. There’s going to be mentoring throughout the training. Now, we’ve looked at methods such as a retrospective pre-post. Really, all that is, it’s a post-survey that’s given after training, where they reflect on where they were before the training, and then they think about where they are now, and you can look at the difference of that.

We also plan to do a post-experience survey, but we’re talking about, is survey the right thing or do we want to do some other feedback mechanism? But when I say post experience, it’s after they’ve facilitated through the summer months and then having them reflect on their growth over the summer. Then the implementation observations, that’s going to be us out on ground watching the facilitators and we’re going to have a checklist and a scoring rating of the extent to which they appear to be confident, they’re demonstrating certain behaviors that we’ve identified. You’ll see that, just to answer that one question, we have three different methods.
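As a rough illustration of the retrospective pre-post idea described above, here is a minimal Python sketch. Everything in it is assumed for the example: the 1-to-5 confidence scale, the field names `before` and `now`, and the four made-up responses are hypothetical, not data or an instrument from the Denver Zoo and Como project. It only shows the arithmetic of "looking at the difference."

```python
from statistics import mean

# Hypothetical responses from one post-training survey. Each facilitator
# rates their confidence on an assumed 1-5 scale twice on the same form:
# where they recall being before the training, and where they are now.
responses = [
    {"before": 2, "now": 4},
    {"before": 3, "now": 4},
    {"before": 1, "now": 3},
    {"before": 4, "now": 4},
]


def retrospective_change(rows):
    """Return each person's change (now minus before) and the average change."""
    changes = [row["now"] - row["before"] for row in rows]
    return changes, mean(changes)


changes, average_change = retrospective_change(responses)
print(changes)         # per-person differences
print(average_change)  # average self-reported growth
```

A positive average suggests self-reported growth, but because both ratings are collected at the same moment, the numbers reflect perception, which is one reason to pair them with something like the implementation observations.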

Sarah Cohn:

I love this, as an example, and I love that you were willing to share this and others, as we talk through this all. As we think about really… We got almost 20 minutes left, let’s just dive into methods. Before folks jump to the next slide, you’re totally free to, you have your own agency and autonomy, but we’d love to have a chat conversation about what data collection methods we’ve used that are not these three?

We keep pointing out the three that are most common: surveys, interviews, observations. Interview could be a group interview, focus group, individual, what have you. But what are some methods that you all have used or experienced that are not those? Would love to read people’s thoughts in chat.

One of my favorites lately has been, whether it’s concept mapping or just different kinds of drawings, I’ve been doing work with middle schoolers in an afterschool program and asking them to draw things at the start of the program and at the end of the school year or whatnot around whatever they’re doing.

Marley Steele-Inama:

A method that I learned about years ago at the American Evaluation Association Conference is called round robin interviews, where you basically take, let’s just say, 10 people. It’s like speed dating. You have five people on one half, five on the other, they’re sitting across from each other and you have five interview questions. Each person is asking the same question of all five people.

It’s hard to describe without going through it, but once it’s all gone through, you then have two people who’ve asked the same question of everyone in that group of 10. They get together and then analyze the data together. It’s super participatory. They’re actually the ones doing the data collection.

Sarah Cohn:

That’s awesome.

Marley Steele-Inama:

Yeah.

Sarah Cohn:

That’s really interesting.

Marley Steele-Inama:

Some great stuff coming into the chat.

Sarah Cohn:

Web analytics, visit diaries or prompting visits and asking people to do specific things. Focus groups are amazing. Photography, asking people to take pictures about particular things as they move through their visit. Those are really fun.

Marley Steele-Inama:

Liz, we might have to rope you into a future webinar to present about that. Because I know photo diaries, it’s a big effort, but some really cool data come in. Social media, for sure. Absolutely. There’s a company that we use, some of you may use as well, it’s called [inaudible 00:37:35] and it takes all of your social media content and does scoring and ratings based on it, and it gives you some ideas of what people are then saying about your organization on social media.

I wouldn’t rely on it as your only guest experience feedback, but it’s at least keeping you aware of what’s happening on social media. Happy or not. Yeah. Okay. Awesome. Great stuff. Feel free to keep-

Sarah Cohn:

This is great.

Marley Steele-Inama:

… stuff in there. But Sarah’s done the hard work for everyone on slide eight. A lot of great ideas there.

Sarah Cohn:

Yeah. Slide eight is just a list, but if you click on the blue link at the bottom, I think I kept it as a spreadsheet. So, apologies if you don’t like Excel. I know some people don’t. I imagine many of us do though, thinking about data. But that spreadsheet really talks through, what are each of these items and when might you use it, when might you apply and collect the data? I think there’s some other information in there. Of course I didn’t have it open myself.

Marley Steele-Inama:

While you’re doing that, there’s a really interesting question in here from Sabrina Jones. Sabrina, I don’t know if you’re willing to come on mic, asking about measuring value. The thing they’re struggling with, they’re leaning into implementing matrix and lean principles in their growth strategy. Struggling with methods for valuing value. I think that’s maybe where I would need some more clarity on what is meant by value?

Sabrina Jones:

Yes. Hi, everyone.

Marley Steele-Inama:

Hi.

Sabrina Jones:

I work at the Mariners’ Museum and Park in Newport News, Virginia, and we’ve been essentially trying to manage our growth. It’s been exponential and it’s all over the place. There’s a need for some methods to make sure we’re going through the evaluation process, how we build and design a product, how we evaluate it at the end and whether or not to pivot, get rid of it or a growth strategy.

My question was around, we have principles, core principles that are fundamental to our mission statement, and those are around conservation, access, value and research. Of that, it’s easy for us to measure how well we’re doing in conservation or access. Do people have access to the museum? Is it usable or friendly for everyone from school groups to summer camps and things like that? But value is a very intangible item to measure when you’re talking about how people interact with an exhibit or interact with a park.

In order to measure value, it’s like, how do you extrapolate that from the methods that you guys are talking about today? I think they could all be valuable, but I’m wondering if any of you have identified any particular methods that help identify or evaluate something as intangible as value in whether or not people feel connected to the mission or the stories that you’re sharing within your collection?

Sarah Cohn:

This is a great question, and I do feel like there are folks on the call who are not Marley and me, who might have thoughts, so please do weigh in in the chat. When you’re thinking about value right now, are you thinking about it or seeking to evaluate discrete experiences or is it the value of the Mariners’ Museum overall, and does this experience match with your sense of what the museum is and its value to you and the community?

Sabrina Jones:

Yes. The value is more related to the mission overall. The mission, in and of itself, is around building social capital. Do all of the experiences and programs that we are designing provide value based on that overall mission? I think it’s, like you said, I think it’s a little bit of both. The goal is, the value of the mission and what we’re trying to do, but we also want to make sure the experiences and the programs are aligned.

Marley Steele-Inama:

Do you do any sort of guest experience survey?

Sabrina Jones:

We used to, it hasn’t been done in a couple of years, and it was a very long survey. Most people never completed it. It was digital, as they were leaving the door, and there were incentives for… There’s a coupon or something at the end, if you completed it and took it into the gift shop. But it gathered data based on just rudimentary marketing statistics, and it wasn’t really anything tied to the mission at the time.

Marley Steele-Inama:

You have an opportunity to develop something that’s really meaningful for the organization. I know that it takes budget, it takes resources to do that there. But it seems like you have an opportunity to create some measures for a survey that will allow you to gather some baseline data on just what that current perception is around, a lot of that social capital, what could be some items around that.

You saying that, Sabrina, that gives us ideas for what future webinars could be about. How can we really do some more finite… Two weeks ago, a lot of questions came up around, how do we measure behavior change? We can talk all day long about what certain measures could look like for measuring social capital. They exist. Asking questions around that connection to mission, belonging, togetherness, there’s all kinds of great things.

We’re part of a larger project with the Museum of Science and Industry in Chicago, that’s measuring one thing, and it’s sense of belonging. There’s dozens of questions on the survey, all about sense of belonging. We could go down lots of rabbit holes. We could talk offline too, about ways to support you with that. Getting that info from people is going to be important.

Sarah Cohn:

I think about when we’re starting, when I’m starting, what might be an ongoing project, whether it’s an exit survey or something else. Thinking about having some of those one-on-one, “will you sit down with me for a while” conversations to really see what comes up for people, particularly around these ideas that have multiple abstract, amorphous definitions or understandings that people might hold. Like thinking about, are there ways to sit down with a number of different types of community members, visitors, organizational members, and say, essentially, what comes up for you as we talk about what it means to develop social capital, what it means for this institution and these various experiences we’re creating to be offering value to you or to others?

From there, thinking about, are there some of these other methods that might be able to be used in a more discrete, in terms of time bounded way that you could do in terms of tracking, how are things going over time?

I’m thinking, Marley, about whether it might make sense for you to talk about [inaudible 00:45:40] a little bit and some of the really different ways that everything we’ve been talking about came together in that one project?

Marley Steele-Inama:

Yeah. Well, I wanted to share this project, [inaudible 00:45:51] Sarah is, slides nine and 10 in the slide deck is, we piloted a new program this past fall, what they call a micro school. It’s an individual who has this vision of creating a middle school that doesn’t actually have a school. They just travel and do six-week, I think they call them salons, but they do these six-week studies in the community. The community is their learning edifice.

They spent six weeks with us at Denver Zoo. We decided, let’s actually ask all the kids, their teachers and our staff who are facilitating this program with them, what do they want to learn about this project? Everything was really different, but a couple of key evaluation questions came out of it, or inquiry questions. Our team was really interested, and then the staff were really interested in their growth in understanding the concept of wildlife conservation. The kids had a lot of different other questions. We really found a few key questions we could hone in on.

Then we asked everyone in the program, and this is what’s on slide 10, what methods should we use collectively to gather the data? It wasn’t just my colleague and me sitting in our offices thinking, well, we think this one would work well, and this one worked well. We asked them. I think what was super fascinating about this is the results that we got from the students and from the adults were very different. There’s a little bit of commonality. We leaned into the one thing, which was the small group discussions, this commonality. But you’ll see that the kids really wanted to do a written journal, but the adults wanted them to do a video journal, and that was not what we were expecting at all. We thought these kids would want to use their phones and make videos. It just wasn’t what they… Maybe it was pushing their expectations for what they could do too. It’ll be interesting to see how this evolves every year we do this.

But we used this information to help inform what we actually did, what methods we used. We used a variety of methods. The full report is on page nine. Thanks, it’s in the chat too. You can review the report and see a little bit more in detail about the methods we used and the results that came out of it. For example, you’ll see on there, students interviewing each other came out fairly high with the kids and the adults were (beep) but we went with it and that’s where we did those round robin interviews.

We had the teachers interviewing the kids and the kids interviewing the teachers as well as our staff. It was built into the program. It didn’t feel like this extra thing. Then Nick would go visit them every Friday and have them do a sticky note voting around their experience and what they were learning. We really did, we just really integrated it into the whole project. The evaluation felt like it was part of the project and the kids were more bought into it because they’re the ones that helped design what the methods were going to be.

Sarah Cohn:

I love that you pulled them into the process too. It didn’t all have to just happen behind the scenes or be totally set before they show up to participate in the program. Bringing them in and then saying, yeah, this is a specific component of this program that you’re participating in is really great.

Marley Steele-Inama:

Yeah, and that they were collecting data as well about the program.

Sarah Cohn:

Really cool.

Marley Steele-Inama:

Yeah.

Sarah Cohn:

We have just a couple minutes left. If you have questions, other questions that you’d like to throw in the chat, please do. I think two things we wanted to make sure we covered is, one, use what you’ve got. Use the methods you have access to. Slide 11 is a really brief, here are Zoom functions that we might sometimes forget about. Everything from the poll question that Ann since shared out with us at the very beginning asking one or a couple. I’ll say that in Zoom, poll questions are single or multi-select, you can’t have people type in their answers. That always has to happen through chat.

Marley Steele-Inama:

You can save the chat.

Sarah Cohn:

Yep, you can save the chat. Then asking people to take notes when you send them into breakout rooms for things is completely reasonable and fine and getting them to sort of share back and say, what came up for you all? How is that different from the other group that just presented and having a conversation. Then reactions, we often use reactions just as fun, literal reactions to what people are saying, but using them as a way to get people to vote on things, to say yes or no, I can do this, I can take that on. Or even using, because you have access to, I think potentially have more emojis, sometimes, it doesn’t look like it’s set up here, but sometimes you can use more emojis. You can think about different ways that people can show their responses to different things.

Then, slide 12, we thought we’d actually do dot voting, which is sometimes fun. There are a lot more dots than there are us, because, as is the norm, people sign up for webinars and don’t attend, but we love that you all are here. We would love to know what data collection methods you’d like CARE to discuss in future webinars.

If you would be willing to grab one of the gray dots and just slide it over and drop it on one of the blue boxes to choose your first vote, and then a second vote, you can use the aqua teal on the right hand side. If you are not able to see this slide or move the dots around, you can put your votes into chat. What’s your first vote, what’s your second vote?

Marley Steele-Inama:

Or if there is a data collection method you don’t see on here, put it in the chat too.

Sarah Cohn:

Yeah, totally.

Marley Steele-Inama:

Because that’s really the intent, this is setting the foundation for it. You can spend weeks and weeks and weeks diving into these as individuals. We’ll do our best to do them in an hour.

Sarah Cohn:

Yeah.

Marley Steele-Inama:

Good, Sarah. They want it all.

Sarah Cohn:

Yay. Excellent. We’ll just do a plug. I know that Ann put the link to signing up for the third of these three webinars in the series in the chat earlier. But on April 28th, we’ll do the second half. Liz Coleman and Sheila [inaudible 00:53:13] both of whom I think are on here will be talking about how do we analyze data and then use it to apply it in our practice? We’ll finish that cycle for these discussions.

Marley Steele-Inama:

Real question, Claire asked what are carousel sheets? Carousel sheets, there’s a variety of ways that you can facilitate them. The way I’ve done them before, as a participant was, you each get basically a large piece of chart paper, and there may be a question on there, or you create a question, and you add ideas to it. Then when your group is done, you move it to the next group, or you could physically move around a room like a carousel, that way too.

Everybody gets a chance to interact with each of those questions. You can use sticky notes and they can stick them on the carousel sheets, the big chart sheets, or people could just use pens, markers and write on there as well. But then I think what’s a really important step, again, to make it really participatory, is that the sheet goes back to the original group, or a group, and their job is to analyze the data on it and share it back out with the larger group. Great question.

Sarah Cohn:

Yeah. One of the cool things that happens when you do it as a carousel, if you have four or five around a room and people have an order in which they’re moving through. I start at number one, Marley starts at two, Alicia starts at three. We can even build off of each other’s ideas. If you use Post-it notes, sticky notes, I see that somebody wrote this Post-it and I’m going to add ideas to it, which makes for that richness of ideas.

Marley Steele-Inama:

Yeah. It’s great to use in any sort of strategic planning, gather as many ideas as you can, and all of that is evaluation. We do evaluation every day. Every single one of us on this call is doing evaluation every day, whether we call it that or not. Well, thank you everyone for hanging out with Sarah and me for the past hour.

Sarah Cohn:

Thanks so much for the conversation and the questions and we’ll get to value and changes in behavior hopefully in future discussions and then dig into some of these different methods, because we can talk all day about them. We’ll think about building those out. Have a wonderful rest of your day, afternoon, April, if you will. Thanks a lot.

Marley Steele-Inama:

Sonnet, if you’re still on, those are called round robin interviews. I’m going to put- [recording ceased]
